Drawing a Heatmap of Mobike Bike Distribution in Beijing with ECharts

2018-10-21 01:04:48

Crawler

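I have been scraping some data recently and came across a Zhihu article about crawling Mobike bike locations to generate a heatmap, which I found quite interesting.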

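I ported the Java code from that article to Python, shown below. With this code you can fetch the number of Mobike bikes near a given geographic location.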

1 | from __future__ import print_function |

The data returned by the Mobike API has the following format:

1 | { |

Frontend

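For the frontend I used the ECharts heatmap. It takes a list as input, where each element is a triple of longitude, latitude, and weight. Each row here represents a single Mobike bike, so every weight is 1.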

1 | [ |
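The conversion from scraped records to these triples can be sketched as follows. This is only an illustration: the key names `lng` and `lat` and the sample coordinates are hypothetical, not the actual fields or values returned by the Mobike API.

```python
import json


def to_heatmap_points(bikes):
    """Turn bike records into [longitude, latitude, weight] triples."""
    # "lng" and "lat" are hypothetical key names chosen for this sketch;
    # substitute whatever fields the scraped records actually contain.
    # Every bike is a single point, so its weight is always 1.
    return [[bike["lng"], bike["lat"], 1] for bike in bikes]


# Made-up sample records, for illustration only.
bikes = [
    {"lng": 116.397, "lat": 39.909},
    {"lng": 116.404, "lat": 39.915},
]

heat_points = to_heatmap_points(bikes)

# Serialize the list so the frontend can load it as the heatmap data.
heat_json = json.dumps(heat_points)
```

The resulting JSON string is a plain list of triples, which is the shape the heatmap data described above expects.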

Code: http://nladuo.github.io/beijing-heatmap/

Result

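With data for 160,000 bikes in total, the page renders very sluggishly. For real use, the points should probably be clustered to reduce their number. The positioning also does not seem very accurate.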

Reducing the Number of Points with Clustering

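The map above displays 160,000 points, which is far too many and makes the frontend very laggy. One fix is to use K-means to group the 160,000 points into 10,000 clusters. Each cluster then takes the place of a single point in the original data and carries a weight equal to the number of points that belong to it.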

The clustering below is done with scikit-learn.

1 | from __future__ import print_function |

Compared with before, the data now looks like this.

1 | [ |
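To make the "cluster center plus weight" idea concrete, here is a small pure-Python sketch of K-means (Lloyd's algorithm) that outputs weighted triples. It is not the scikit-learn script used in this post. The function names, the sample coordinates, and the choice to treat longitude and latitude as plane coordinates are all assumptions made for illustration. For 160,000 points, a library implementation such as scikit-learn's `KMeans` is the practical choice.

```python
import random


def nearest_center(point, centers):
    """Index of the center closest to point (squared planar distance)."""
    lng, lat = point
    return min(
        range(len(centers)),
        key=lambda i: (lng - centers[i][0]) ** 2 + (lat - centers[i][1]) ** 2,
    )


def kmeans_weighted(points, k, iterations=10, seed=0):
    """Cluster (lng, lat) tuples; return [lng, lat, weight] per non-empty cluster."""
    rng = random.Random(seed)
    # Start from k randomly chosen input points.
    centers = rng.sample(points, k)
    for _ in range(iterations):
        # Assignment step: accumulate coordinate sums and counts per cluster.
        sums = [[0.0, 0.0, 0] for _ in range(k)]
        for p in points:
            s = sums[nearest_center(p, centers)]
            s[0] += p[0]
            s[1] += p[1]
            s[2] += 1
        # Update step: move each center to the mean of its members.
        # An empty cluster keeps its previous center.
        centers = [
            (s[0] / s[2], s[1] / s[2]) if s[2] else c
            for s, c in zip(sums, centers)
        ]
    # Final pass: the weight of a cluster is the number of points assigned to it.
    counts = [0] * k
    for p in points:
        counts[nearest_center(p, centers)] += 1
    return [[lng, lat, n] for (lng, lat), n in zip(centers, counts) if n > 0]


# Made-up sample: eight bikes in two loose groups.
points = [(116.30 + 0.001 * i, 39.90) for i in range(5)]
points += [(116.50 + 0.001 * i, 40.00) for i in range(3)]

heat = kmeans_weighted(points, k=2)
```

The weights always sum to the number of input points, so the total "mass" of the heatmap is preserved even though individual positions are averaged away.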

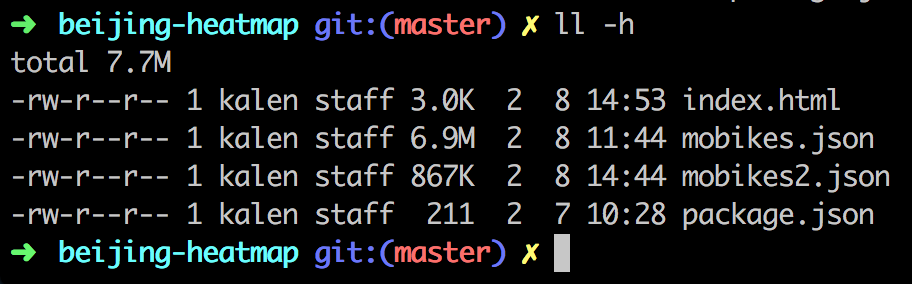

The data shrank from 6.9M to 867K, at the cost of some precision.

What Does ofo Look Like?

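Following the same approach, we can also build a distribution map for ofo's yellow bikes. A little over 50,000 records were scraped this time, and the result is shown below.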